Do you know which inputs your neural network likes most?

Recent advances in training deep neural networks have produced impressive machine learning models that can tackle a very diverse range of tasks. One notable downside of developing such a model is that it is considered a “black-box” approach: the model learns from the data you feed it, but you don’t really know what is going on inside it.

Shapeshifting PyTorch

An important consideration in machine learning is the shape of your data and your variables. You are often shifting and transforming data and then combining it. Thus, it is essential to know how to do this and what shortcuts are available.

Let’s start with a tensor with a single dimension:

    import torch
    test = torch.tensor([1, 2, 3])
    test.shape  # torch.Size([3])

Now assume we have built some machine learning model which takes batches of such single dimensional tensors as input and returns some output.
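As a minimal sketch of how such a tensor could be turned into a batch, the snippet below uses `unsqueeze` to add a leading batch dimension and `torch.stack` to combine several tensors. The second tensor's values and the variable names `single_batch` and `batch` are made up for illustration.

```python
import torch

test = torch.tensor([1, 2, 3])

# Add a leading batch dimension: shape [3] becomes [1, 3].
single_batch = test.unsqueeze(0)

# Stack several 1-D tensors of equal length into one batch: shape [2, 3].
# The second tensor is a made-up example.
batch = torch.stack([test, torch.tensor([4, 5, 6])])

print(single_batch.shape)
print(batch.shape)
```

A model that expects batched input would then receive `batch` (or `single_batch` for a single example) rather than the bare one-dimensional tensor.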

What are embeddings in machine learning?

Every now and then, you need embeddings when training machine learning models. But what exactly is such an embedding and why do we use it?

Basically, an embedding is used when we want to map some representation into another dimensional space. Doesn’t make things much clearer, does it?

So, let’s consider an example: we want to train a recommender system on a movie database (the typical Netflix use case). We have many movies, along with the ratings that users have given to them.
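To make the idea concrete, here is a small sketch using `torch.nn.Embedding`, which maps integer IDs to learnable vectors. The sizes, the IDs, and the dot-product scoring are assumptions chosen for illustration (dot-product scoring is one common choice in matrix-factorization recommenders), not necessarily the approach used in the post.

```python
import torch

torch.manual_seed(0)

# Hypothetical sizes: 4 users, 5 movies, 3-dimensional embeddings.
num_users, num_movies, dim = 4, 5, 3

user_emb = torch.nn.Embedding(num_users, dim)
movie_emb = torch.nn.Embedding(num_movies, dim)

# Two made-up (user, movie) pairs, given as integer IDs.
user_ids = torch.tensor([0, 2])
movie_ids = torch.tensor([1, 4])

u = user_emb(user_ids)    # shape [2, 3]
m = movie_emb(movie_ids)  # shape [2, 3]

# Assumed scoring rule: dot product of user and movie vectors.
scores = (u * m).sum(dim=1)  # shape [2]
print(scores.shape)
```

During training, the embedding vectors would be adjusted so that these scores move closer to the observed ratings.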

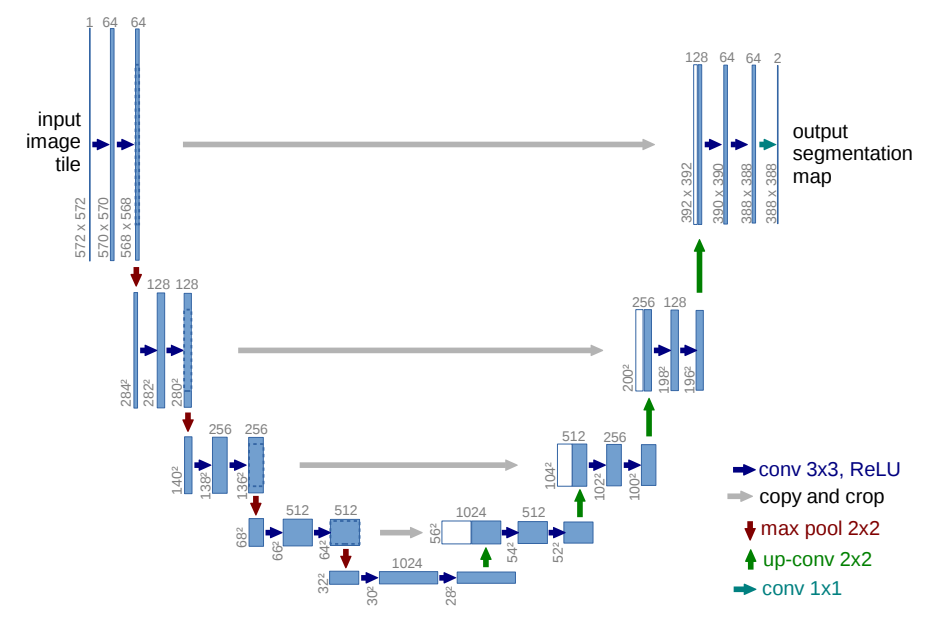

Deep Learning on Medical Images With U-Net

Illustration taken from the U-Net paper

I recently read an interesting paper titled “U-Net: Convolutional Networks for Biomedical Image Segmentation” by Olaf Ronneberger, Philipp Fischer, and Thomas Brox. It describes how to handle the challenges of image segmentation in biomedical settings, and I summarize it in this blog post.

Challenges for medical image segmentation

A typical task when confronted with medical images is segmentation, which means identifying the objects of interest in an image.

Play Video Games Using Neural Networks

Deep Q Learning

Today, I want to show you how you can use deep Q learning to let an agent learn how to play a game. Deep Q learning is a method that DeepMind introduced in their 2015 Nature paper to play Atari video games by just observing the pixels of the game. To keep things simple for this blog post, we will use a slightly easier game, in which the agent has to balance a pole on a paddle.
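At the core of Q learning is the target value the network is trained towards: the reward plus the discounted best Q-value of the next state, with no future value once an episode has ended. The sketch below computes that target for a made-up batch. The Q-values, rewards, and discount factor are illustrative assumptions, and other parts of the full method, such as experience replay and a separate target network, are left out.

```python
import torch

gamma = 0.99  # discount factor (assumed value)

# Hypothetical Q-values for 2 next states with 2 possible actions each.
next_q = torch.tensor([[1.0, 2.0],
                       [0.5, 0.25]])
rewards = torch.tensor([1.0, 1.0])
# 1.0 marks a transition that ended the episode, 0.0 otherwise.
done = torch.tensor([0.0, 1.0])

# Target: r + gamma * max_a Q(s', a), with the future term dropped
# for terminal transitions.
targets = rewards + gamma * next_q.max(dim=1).values * (1.0 - done)
print(targets)
```

In a full training loop, the network's predicted Q-value for the action actually taken would be regressed towards `targets`.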